AI-first must be ethics-first and human-first: For Aman, “AI-first” leadership isn’t about speed or efficiency alone; it means designing organizations where AI is a copilot and humans remain accountable for meaning, context, and moral responsibility, with ethics treated as a core competitive advantage, not a side policy.

Leaders shift from decision makers to system architects and sense makers: The modern leader’s job is to architect Human-AI workflows (things like “Company Constitutions,” pod-based org structures, ethical tollgates, and red-team prompts) so AI augments strategic reviews, performance management, and HR processes without removing human judgment.

Moral imagination and AI literacy are the real differentiators: Beyond tools, Aman emphasizes two cultural muscles: AI literacy (promptcraft, experimentation, human-in-the-loop habits) and moral imagination (asking “who else is affected?” and embedding ethics into rituals, metrics, and dashboards) so organizations scale not just intelligence, but integrity.

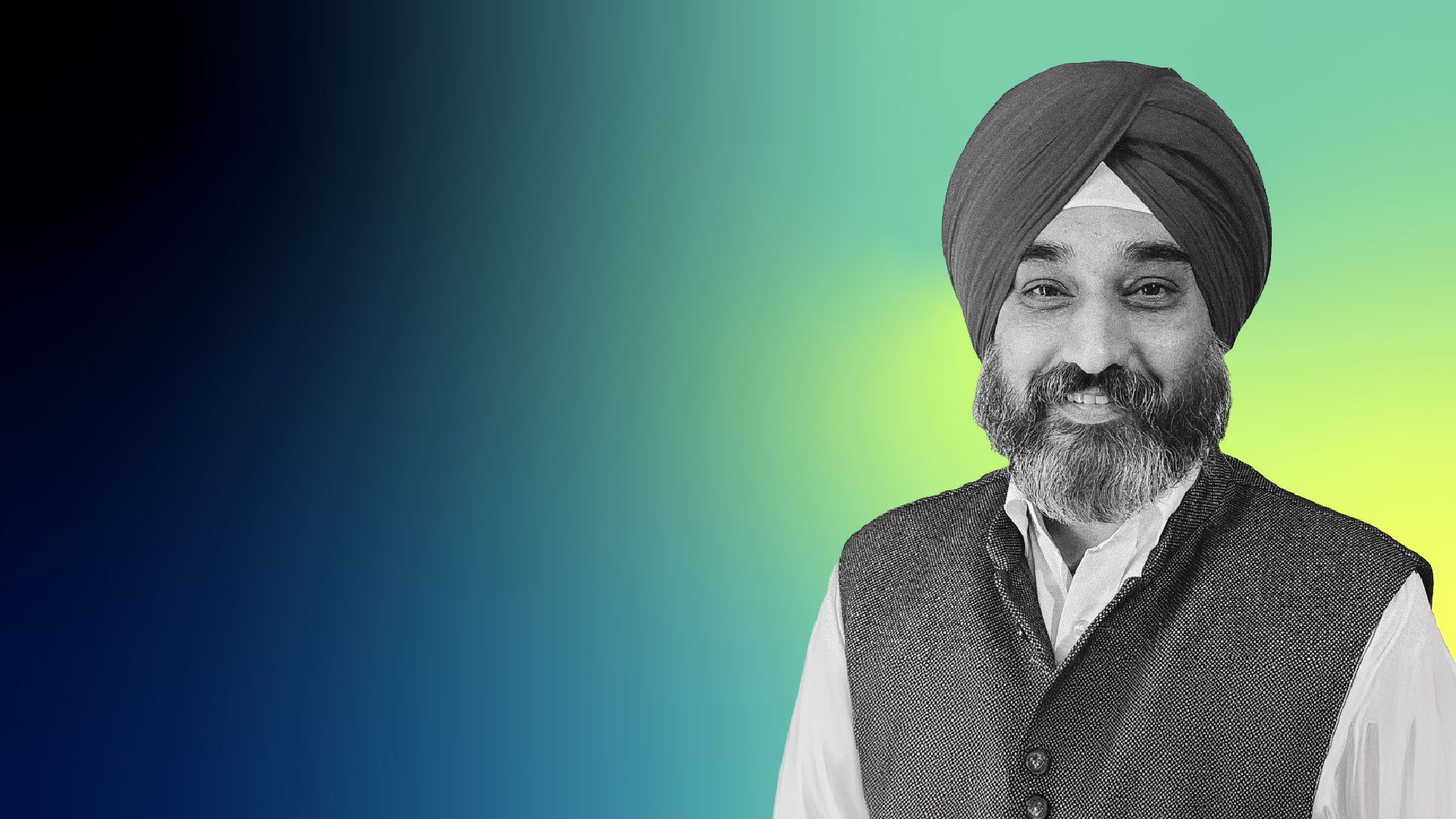

In our interview, Aman took a deep dive into HR and leadership workflows in an AI-first world and explained how AI must be implemented with a focus on moral responsibility.

Working at the "edge of possible"

My work exists at what I call "The Edge of Possible." My leadership journey began in 1998 as a computer engineer, grounded in the code and architecture of what was then possible. But I quickly realized that the true potential of technology isn't in the circuits, but in its connection to human ambition and market dynamics.

This insight propelled me from engineering into the realms of consulting and growth advisory, where I learned to translate technological capability into strategic advantage. I've always been, at my core, a network builder — a convener of ecosystems. This led me to cofound missions like the India Blockchain Alliance, the India AI Alliance, and the Ethical AI Alliance, not merely as organizations, but as collective attempts to steer powerful technologies toward responsible and inclusive outcomes. My work with The Purpose Coalition further cemented my belief that business growth and social good must be two sides of the same coin.

For years, I've advised global companies seeking to navigate the complex, high-growth landscape of India, helping them align their technological ambitions with local context and global ethics.

So, my leadership path has been a conscious evolution: from architecting systems, to architecting strategy, and finally, to architecting ecosystems. Today, as an advisor for "AI-first" transformations, I guide leaders to see that this isn't just about being first to market; it's about being first to meaning, first to responsibility. An "AI-first" company must, by definition, be a "human-first" and "ethics-first" company.

What it means to be an AI-first leader

The AI-first world is fundamentally recasting the role of a leader. It's a shift from being a decision maker to a sense maker and system architect.

Specifically, my role is no longer just about advising what decision to make, but about designing how decisions are made across the organization. For the SMBs I work with, this is both a massive challenge and their greatest opportunity for leverage.

Concretely, my leadership role now involves:

- Architecting for emergence, not just execution: I help leaders design organizational structures that are fluid and project-based, able to form around problems and dissolve once an AI-driven solution is operational. The long-held assumption of a static, pyramidal org chart is a liability. We're moving towards a "hub-and-spoke" or "pod-based" model where leadership's job is to set the context and provide the tools (especially the AI tools), then get out of the way.

- Shifting from command to curation: The leader's primary value is no longer in having all the answers, but in curating the best questions and the most reliable intelligence. My work involves helping SMB leaders install a 'second brain' for their company—a curated stack of AI tools and data protocols. Their key task is to constantly refine the prompts, challenge the outputs, and ensure ethical guardrails are baked into the process.

- Being the Chief Ethics Officer (de facto): In an AI-first world, every leader must become fluent in ethics. This isn't a soft skill; it's a core competitive advantage and a risk mitigation strategy. My specific role is to make this tangible by helping them build self-regulation frameworks and stakeholder guidelines. We move ethics from a theoretical policy to an operational checklist that is part of every product development cycle and every procurement decision for a new AI tool.

Why AI-first leadership requires unlearning old assumptions

Letting go of long-held assumptions has been critical. I've had to unlearn:

- The assumption of experience as the ultimate guide: In a world changing this fast, a leader's past experience can be a blindfold. I now advise leaders to practice intellectual humility, valuing the data-driven, counter-intuitive insight from an AI model as much as the gut feeling of a seasoned executive. The new leadership muscle is knowing when to trust the algorithm over your own instinct.

- The illusion of control: The traditional leader sought to control information, processes, and people. That is now impossible and counterproductive. My focus is on establishing clarity of purpose and principles, like a constitution for the company. If every employee has a super-powered AI copilot, you cannot control their every move. You must trust that they are operating within a well-defined ethical and strategic framework. This is the essence of the self-regulation we build.

- The primacy of the individual genius leader: The heroic CEO making all the calls is an obsolete model. The new leadership is collective and augmented. It's about fostering a symbiotic relationship between human teams and AI systems. My role is to help the leader cultivate this new ecosystem, where their success is measured by the collective intelligence and ethical integrity of their human-AI organization.

How leaders can bridge the gap between AI’s promise and real-world outcomes

The promise being sold is one of fully autonomous AI that replaces human effort. The organizational reality is that AI is a powerful but brittle copilot that fails without a deeply integrated human feedback loop. In other words, companies expect a 'set-it-and-forget-it' tool, but they get a system that requires more nuanced management, not less.

This manifests in three specific disconnects I consistently see:

- The context gap: AI models have vast general knowledge but zero innate understanding of your specific business context, your culture, or your client's unspoken needs. An LLM can draft a strategy, but it doesn't know that 'Project Phoenix' failed disastrously two years ago and is a cultural taboo.

- The governance gap: AI can generate output at lightning speed, but organizations lack the parallel governance to validate, approve, and act on that output with the same velocity. This creates a new bottleneck and can lead to either reckless deployment or analysis paralysis.

- The ethics gap: The drive for efficiency pushes companies to automate everything. However, without embedded ethical checkpoints, this leads to automated bias, brand damage, and a loss of human accountability.

My approach is to architect for Human-AI symbiosis, not replacement. I build systems where human and machine intelligence amplify each other.

- Designing the context layer: I advise leaders that their most critical strategic asset is no longer just their data, but their contextualized data. We implement what I call a "Company Constitution" — a living document that captures the organization's unique ethics, strategic boundaries, past failures, and brand voice. This isn't just a PDF; it's a dynamic prompt that gets integrated into every major AI interaction, grounding the AI's output in the company's specific reality.

- Implementing friction by design: Instead of trying to eliminate all friction, we design strategic friction. This means building mandatory human checkpoints into AI-driven workflows.

- Evolving org design: I am moving SMBs away from rigid departmental silos towards fluid, project-based "pods." In each pod, the human is the pilot—accountable for the final outcome, setting the mission, and providing the core context. The AI is the copilot — handling data crunching, draft generation, and scenario modeling. This model makes the roles and responsibilities clear: the human leads, the AI assists, and the system is designed for collaboration.
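To make the "Company Constitution as a dynamic prompt" idea a little more concrete, here is a minimal sketch that renders the constitution as a preamble and prepends it to every task prompt. The `CompanyConstitution` fields and the `build_grounded_prompt` helper are hypothetical names chosen for illustration, and the call to an actual model is deliberately left out.

```python
from dataclasses import dataclass, field


@dataclass
class CompanyConstitution:
    """Hypothetical container for the context every AI interaction should carry."""
    ethics: list[str] = field(default_factory=list)
    strategic_boundaries: list[str] = field(default_factory=list)
    past_failures: list[str] = field(default_factory=list)
    brand_voice: str = ""

    def as_preamble(self) -> str:
        # Render each non-empty section as a labelled bullet list.
        sections = [
            ("Ethical principles", self.ethics),
            ("Strategic boundaries", self.strategic_boundaries),
            ("Past failures to avoid repeating", self.past_failures),
        ]
        lines = ["You are assisting our company. Respect the following context."]
        for title, items in sections:
            if items:
                lines.append(f"{title}:")
                lines.extend(f"- {item}" for item in items)
        if self.brand_voice:
            lines.append(f"Brand voice: {self.brand_voice}")
        return "\n".join(lines)


def build_grounded_prompt(constitution: CompanyConstitution, task: str) -> str:
    """Prepend the constitution so every request is grounded in the same context."""
    return f"{constitution.as_preamble()}\n\nTask:\n{task}"


if __name__ == "__main__":
    constitution = CompanyConstitution(
        ethics=["Never use customer data without consent"],
        past_failures=["Project Phoenix failed two years ago; do not propose reviving it"],
        brand_voice="Plain and direct",
    )
    print(build_grounded_prompt(constitution, "Draft a Q3 growth strategy."))
```

Keeping the constitution as structured data rather than a static PDF means it can be edited in one place while every downstream prompt picks up the change.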


Returning to the second point, friction by design, here are two examples:

- The "red team" prompt: For any AI-generated strategy, a mandatory final step is to prompt a different AI model: "Act as a skeptic. Critique this strategy and list its top 3 potential failure modes." This output is then reviewed by a human manager before final sign-off.

- The "ethical tollgate": In our self-regulation frameworks, we establish clear tollgates. Any AI output that touches customer data, makes a financial recommendation, or involves public messaging must pass through a defined ethical checklist overseen by a human.
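The two friction mechanisms above can be sketched as a small review gate: build the red-team prompt for a different model, flag whether the output touches a tollgate category, and refuse human sign-off until the critique has been read and, where required, the checklist passed. This is an illustrative sketch only; the category tags, `prepare_review`, and `sign_off` are hypothetical, and sending the prompt to a second model is out of scope.

```python
from dataclasses import dataclass
from typing import Optional

# Output categories that, per the tollgate rule above, need a human-overseen checklist.
TOLLGATE_TRIGGERS = {"customer_data", "financial_recommendation", "public_messaging"}

RED_TEAM_PROMPT = (
    "Act as a skeptic. Critique this strategy and list its top 3 potential "
    "failure modes.\n\nStrategy:\n{strategy}"
)


@dataclass
class Review:
    needs_tollgate: bool
    red_team_prompt: str  # intended for a different model than the one that drafted
    approved_by: Optional[str] = None


def prepare_review(strategy: str, tags: set[str]) -> Review:
    """Build the red-team prompt and decide whether the ethical tollgate applies."""
    return Review(
        needs_tollgate=bool(tags & TOLLGATE_TRIGGERS),
        red_team_prompt=RED_TEAM_PROMPT.format(strategy=strategy),
    )


def sign_off(review: Review, reviewer: str, critique_read: bool, checklist_passed: bool) -> Review:
    """Record human approval only after the critique (and, if required, the checklist)."""
    if not critique_read:
        raise ValueError("A human must review the red-team critique before sign-off.")
    if review.needs_tollgate and not checklist_passed:
        raise ValueError("Ethical tollgate checklist has not been passed.")
    review.approved_by = reviewer
    return review
```

The point of raising an error rather than logging a warning is that the friction is mandatory: nothing is marked approved until a named person has done both checks.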

How AI transforms strategy, management, and HR workflows

My approach is to target high-leverage, repetitive processes that bottleneck strategic thinking. The overhaul follows a consistent pattern: deconstruct, augment, reassemble.

Here are three granular overhauls across strategy, management, and HR:

Workflow: Quarterly strategic review and environmental scanning

- Before: A senior leader would spend days manually scraping news, reports, and competitor updates into a sprawling PowerPoint. The process was reactive, incomplete, and heavily biased by their individual focus.

- After: AI-powered strategic radar

- Tools: A combination of web-search-enabled LLMs and data visualization platforms (e.g., Power BI, Tableau).

- The granular overhaul:

- Automated digestion: I built a simple workflow where an LLM is prompted to perform a daily scan: "Based on the top 10 industry news sources, identify three emerging trends, two potential market disruptions, and one regulatory update relevant to [Specific Industry]. Summarize each in 100 words with a source link."

- Structured synthesis: This daily output is fed into a shared database (like Airtable or a simple spreadsheet).

- Dynamic dashboarding: That database is connected to a dashboard tool. Instead of a static quarterly PowerPoint, leadership now has a live strategic dashboard that visually tracks trend velocity, competitor moves, and market sentiment over time.

- Results: Strategic awareness shifted from quarterly and retrospective to continuous and anticipatory. Leadership meetings now start with data-driven insights, not anecdotal hunches.
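A rough sketch of the "strategic radar" plumbing, assuming a CSV file stands in for the shared spreadsheet: fill the daily scan prompt, append the parsed findings, and compute a crude "trend velocity" as mentions per ISO week for the dashboard to chart. The column names, `append_findings`, and `trend_velocity` are hypothetical; calling the LLM and parsing its answer into rows are assumed to happen elsewhere.

```python
import csv
from collections import Counter
from datetime import date
from pathlib import Path

SCAN_PROMPT = (
    "Based on the top 10 industry news sources, identify three emerging trends, "
    "two potential market disruptions, and one regulatory update relevant to {industry}. "
    "Summarize each in 100 words with a source link."
)

FIELDS = ["date", "kind", "label", "summary", "source"]


def build_scan_prompt(industry: str) -> str:
    return SCAN_PROMPT.format(industry=industry)


def append_findings(path: Path, day: date, findings: list[dict]) -> None:
    """Append one day's parsed findings to the CSV acting as the shared database."""
    new_file = not path.exists()
    with path.open("a", newline="", encoding="utf-8") as f:
        writer = csv.DictWriter(f, fieldnames=FIELDS)
        if new_file:
            writer.writeheader()
        for item in findings:
            writer.writerow({"date": day.isoformat(), **item})


def trend_velocity(path: Path) -> dict[str, Counter]:
    """Count how often each trend label appears per ISO week (a crude velocity proxy)."""
    weekly: dict[str, Counter] = {}
    with path.open(newline="", encoding="utf-8") as f:
        for row in csv.DictReader(f):
            if row["kind"] != "trend":
                continue
            year, week, _ = date.fromisoformat(row["date"]).isocalendar()
            weekly.setdefault(row["label"], Counter())[f"{year}-W{week:02d}"] += 1
    return weekly
```

A BI tool such as Power BI or Tableau could then read the same file, so the dashboard updates whenever the daily scan appends new rows.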


Workflow: Management and performance dashboarding

- Before: Managers wasted hours collating data from siloed systems (Sales, Marketing, Support) into weekly KPI reports. The story behind the numbers was often lost.

- After: Narrative-driven performance intelligence

- Tools: Business intelligence (BI) tools plus core LLMs, accessed via API or by manual input.

- The granular overhaul:

- Data aggregation: KPIs are still centralized in a BI tool (e.g., Power BI, Google Looker).

- The "narrative prompt": Instead of just exporting a chart, we copy the key weekly data (e.g., "Sales up 5%, Customer Support tickets up 15%, Marketing lead volume down 3%") and paste it into an LLM with the prompt: "Act as a business analyst. Analyze this set of performance metrics for the past week. Generate three plausible hypotheses that explain the correlations between these data points. Also, flag one potential strategic risk."

- Results: Managers receive a concise, analytical narrative alongside their data. This transforms their role from data reporter to hypothesis tester, focusing their energy on investigating the AI-generated insights rather than building the report.
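The copy-and-paste step of the "narrative prompt" can be automated with a few lines that turn week-over-week KPI changes into the phrasing used above and wrap them in the analyst prompt. This is a sketch under the assumption that the BI tool can export changes as percentages; `describe_change` and `build_narrative_prompt` are hypothetical helpers.

```python
NARRATIVE_PROMPT = (
    "Act as a business analyst. Analyze this set of performance metrics for the past week. "
    "Generate three plausible hypotheses that explain the correlations between these data "
    "points. Also, flag one potential strategic risk.\n\nMetrics: {metrics}"
)


def describe_change(name: str, pct: float) -> str:
    """Turn a week-over-week percentage change into a phrase like 'Sales up 5%'."""
    if pct == 0:
        return f"{name} flat"
    direction = "up" if pct > 0 else "down"
    return f"{name} {direction} {abs(pct):g}%"


def build_narrative_prompt(kpi_changes: dict[str, float]) -> str:
    """Format the week's KPI changes and wrap them in the analyst prompt."""
    metrics = ", ".join(describe_change(n, p) for n, p in kpi_changes.items())
    return NARRATIVE_PROMPT.format(metrics=metrics)


if __name__ == "__main__":
    print(build_narrative_prompt(
        {"Sales": 5, "Customer Support tickets": 15, "Marketing lead volume": -3}
    ))
```

The hypotheses that come back are still only hypotheses; the manager's job is to test them against the underlying data.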

Workflow: HR essentials and onboarding

- Before: HR and people managers spent significant time crafting standardized documents like job descriptions, performance review templates, and onboarding plans, often starting from scratch or outdated versions.

- After: The "HR policy copilot"

- Tools: Core LLMs with a library of internal policy documents and cultural principles.

- The granular overhaul:

- Creation: To create a new job description, the prompt is: "Using our company's core values [list values] and the competency framework for [Department], generate a job description for a [Job Title]. Include 5 key responsibilities and 3 required competencies."

- Personalization: For onboarding, the prompt is: "Create a 30-60-90 day plan for a new [Job Title]. The first 30 days should focus on cultural assimilation and foundational knowledge, the next 30 on independent contribution, and the final 30 on strategic projects."

- Results: A 90% reduction in the time spent on drafting essential HR documents. More importantly, it ensures consistency and alignment, embedding the company's culture and strategic intent directly into its foundational people processes.
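The bracketed placeholders in the HR prompts above map naturally onto a small template library. The sketch below uses Python's standard `string.Template`, whose `substitute` method raises `KeyError` when a field is missing, so an incomplete prompt fails loudly instead of reaching the model with a blank. The `PROMPT_LIBRARY` name and its keys are hypothetical.

```python
from string import Template

# Hypothetical prompt library; placeholders mirror the bracketed fields above.
PROMPT_LIBRARY = {
    "job_description": Template(
        "Using our company's core values ($values) and the competency framework for "
        "$department, generate a job description for a $job_title. "
        "Include 5 key responsibilities and 3 required competencies."
    ),
    "onboarding_plan": Template(
        "Create a 30-60-90 day plan for a new $job_title. The first 30 days should focus "
        "on cultural assimilation and foundational knowledge, the next 30 on independent "
        "contribution, and the final 30 on strategic projects."
    ),
}


def render_prompt(name: str, **fields: str) -> str:
    """Fill a library template; Template.substitute raises KeyError if a field is missing."""
    return PROMPT_LIBRARY[name].substitute(**fields)


if __name__ == "__main__":
    print(render_prompt("onboarding_plan", job_title="Financial Analyst"))
```

Storing the templates centrally is also what makes the consistency claim plausible: every manager starts from the same wording.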

A practical framework for building AI literacy inside organizations

Building AI literacy is not about creating a team of data scientists; it's about fostering a culture of augmented intelligence. Our goal is to make asking AI as natural and fundamental as searching the web. We've moved beyond one-off training sessions to a continuous, embedded upskilling environment. Here's our framework for building AI literacy:

- Foundation: Demystification and ethical grounding (the "why" and the "what")

- Step: We start not with tools, but with principles. I lead sessions on the 'Ethics of Augmentation,' focusing on responsible use, bias detection, and data privacy. We use real-world scenarios relevant to their roles.

- Goal: To eliminate fear and build a foundation of trust and responsibility. Everyone must understand that AI is a tool for elevation, not replacement, and that its use comes with ethical guardrails.

- Application: Promptcraft and workflow integration (the "how")

- Step: We run hands-on, role-specific workshops. For example:

- For Strategists: "Prompting for Market Analysis and Scenario Planning."

- For HR: "Prompting for JD Creation, Neutral-Sounding Performance Feedback, and Onboarding Plan Generation."

- For Operations: "Prompting for Process Documentation and KPI-to-Narrative Translation."

- Goal: Move from theory to practice. We provide a curated 'Prompt Library' with templates for common tasks, lowering the barrier to entry and ensuring quality.


- Advanced: Specialization and innovation (the "what's next")

- Step: We identify and empower 'AI Champions' in each team. These are individuals who show aptitude and enthusiasm. They receive advanced training and become the go-to experts for their department, responsible for refining workflows and spotting new opportunities.

- Goal: To create a self-sustaining, internal engine for innovation and peer-to-peer support.

The behaviors that define an AI-ready organization

An "AI-ready" team or organization is not defined by its tool stack, but by its behaviors and reflexes. It looks like this:

- Reflexive augmentation: The default response to any repetitive or data-intensive task is, "How can we intelligently augment this with AI?" It's a cultural reflex.

- Comfort with iteration: Teams understand that the first prompt is a starting point, not the final product. They are skilled in iterative refinement and critical evaluation of AI output.

- Ethical friction is embraced: Team members feel psychologically safe to question an AI's output and flag potential biases or ethical concerns. This is seen as a core competency, not as obstruction.

- Leadership models the behavior: Other leaders and I openly share how we use AI in our own workflows, from drafting communications to analyzing strategy, demonstrating that this is a priority from the top.

The most common challenges teams face when learning AI

The path hasn't been entirely smooth. Key challenges with AI readiness include:

- Initial exposure can lead to paralysis. People freeze, afraid of writing the "wrong" prompt. We overcame this by emphasizing that there is no perfect prompt, only a starting point for a conversation, and by providing starter templates.

- Early on, there was a tendency to blindly trust and use AI-generated content without sufficient editing, leading to generic or occasionally inaccurate work. This was solved with a human-in-the-loop mandate and creating peer-review checkpoints specifically for AI-generated material.

- Some team members expected AI to solve problems autonomously. We continuously reinforce the "copilot" analogy: The AI controls the stick and the instruments, but the human is the pilot, navigating and making the final call.

In essence, building an AI-ready org is a cultural transformation project with a technological component, not the other way around. It's about weaving a new thread of augmented intelligence into the very fabric of how we think, decide, and create.

How to choose the right AI tool for the right cognitive task

My stack is less a fixed set of tools and more a dynamic, principled ecosystem designed for specific cognitive tasks. I categorize them by function, not brand, as the specific providers can be fluid. My core principle is 'the right intelligence for the right task,' and I advise my clients to think the same way.

Here is the functional breakdown:

1. The orchestration and ideation layer:

- Function: My primary digital workspace for long-form reasoning, complex document analysis, and nuanced strategy drafting.

- Impact: This is my strategic copilot. Its biggest impact comes from handling very large context windows, which lets me hold a continuous, deep conversation with a document or a problem statement. It excels at maintaining narrative coherence over long outputs, which is indispensable for drafting frameworks, ethical guidelines, and long-term strategic narratives.

2. The agility and execution layer:

- Function: My go-to for rapid prototyping, structured data tasks, code generation, and interacting with diverse data formats (PDFs, spreadsheets, images).

- Impact: This is my operational copilot. Its impact is speed and versatility. When I need to build a quick model, transform data, or get a structured output (like JSON or a table), this is my first stop. Its plugin ecosystem and multimodal capabilities make it a powerful hub for executing defined tasks.

3. The challenger and contrarian layer:

- Function: A dedicated tool for stress-testing ideas, seeking alternative viewpoints, and engaging in creative, sometimes irreverent, brainstorming.

- Impact: Its impact is in breaking groupthink. I use it specifically to challenge outputs from the other two. By prompting it to "attack this strategy" or "find the weak points in this ethical framework," I institutionalize a necessary devil's advocate in my process. This is a critical component of building robust, self-regulating systems for clients.

The most significant change hasn't been swapping one tool for another, but orchestrating them into a formal workflow. I've moved from using AI tools ad hoc to designing a 'Human-Led, AI-Augmented' pipeline.

For example, a standard workflow for creating a client advisory document might now look like this:

- Drafting in [Orchestration Tool]: For deep, context-rich initial synthesis.

- Validation in [Challenger Tool]: To critique and find flaws.

- Formatting and packaging in [Agility Tool]: To create the final client-ready presentation and supporting data visuals.
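The "Human-Led, AI-Augmented" pipeline above can be sketched as a function that takes each layer as an interchangeable callable, which keeps the workflow tool-agnostic in the way the functional breakdown suggests. Here `advisory_pipeline`, the prompt wording, and the `human_approves` callback are hypothetical; in practice each layer would wrap whichever provider currently fills that role.

```python
from typing import Callable

# Hypothetical: each layer is any callable that takes a prompt and returns text.
Model = Callable[[str], str]


def advisory_pipeline(
    brief: str,
    orchestration: Model,
    challenger: Model,
    agility: Model,
    human_approves: Callable[[str, str], bool],
) -> str:
    """Draft, critique, get human approval, then package the advisory document."""
    draft = orchestration(f"Synthesize a client advisory draft from this brief:\n{brief}")
    critique = challenger(f"Attack this draft and find its weak points:\n{draft}")
    # Human-led: a person reads both the draft and the critique before packaging.
    if not human_approves(draft, critique):
        raise RuntimeError("Draft rejected at human review; revise before packaging.")
    return agility(f"Format this approved draft as a client-ready presentation outline:\n{draft}")
```

Because the layers are passed in rather than hard-coded, swapping one provider for another changes only the callable, not the workflow.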

How one consultancy redesigned its value chain using AI

I often work with knowledge-based SMBs — let's use the example of a boutique consultancy specializing in market entry strategies. They were experts in their field, but their core service was a major bottleneck.

The problem: Their primary deliverable was a comprehensive strategic blueprint for clients. This required a senior strategist to spend days synthesizing volumes of data — market reports, client interviews, competitor intelligence — into a single, coherent document. It was their highest-value work, but also their most time-consuming, limiting their capacity and scalability.

The pivot: The transformative moment wasn't just in implementing AI tools, but in re-architecting the entire value chain. The goal was no longer to make the strategist faster at their old job, but to redesign the job itself.

The granular, tool-agnostic setup:

- The "inputs" layer: We first systematized the chaos of information.

- Client discovery calls were transcribed using a standard speech-to-text API.

- All market research and PDF reports were consolidated into a single digital repository.

- The "synthesis" layer: The prompt-driven assembly line. This was the core of the transformation. We created a sequenced prompt chain, using a combination of frontier LLMs — I often start with Claude for its nuanced handling of long documents, use ChatGPT for more structured, template-based tasks, and may use Grok for brainstorming contrarian perspectives.

- Step 1: Thematic extraction: The first prompt would be fed the raw transcript: "Act as a qualitative researcher. Identify and list the top 5 strategic priorities and 3 core challenges expressed by the client in this conversation. Present as a bulleted list."

- Step 2: Data correlation: The output from Step 1, along with the market research PDFs, was fed to a different model with a new prompt: "Cross-reference the client's priorities [from Step 1 output] with the attached market data. Identify 3 concrete opportunities and 2 potential risks. Format in a table."

- Step 3: Strategic drafting: All previous outputs were then synthesized: "Using the identified priorities, opportunities, and risks, generate a draft for a 3-pillar market entry strategy. For each pillar, suggest key objectives and two potential tactical initiatives."

- The "human-in-the-loop" layer: The AI generated a robust first draft, about 80% complete, in under an hour. This is where the fundamental cognitive shift occurred. The senior strategist's role was radically transformed from writer and synthesizer to curator, validator, and creative amplifier. Their workflow became: review and validate the draft, inject their own insight, use the time saved to devise truly innovative tactics, and tailor the result to the client's needs.
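The sequenced prompt chain can be expressed as a short function in which each step's output is fed into the next prompt. This sketch assumes each model is a plain callable from prompt to text and that the market research has already been extracted to text; `run_chain` and its parameter names are hypothetical. It returns every intermediate result so the strategist can audit the chain rather than only the final draft.

```python
from typing import Callable

Model = Callable[[str], str]  # hypothetical: prompt in, text out

STEP1 = ("Act as a qualitative researcher. Identify and list the top 5 strategic priorities "
         "and 3 core challenges expressed by the client in this conversation. "
         "Present as a bulleted list.\n\nTranscript:\n{transcript}")
STEP2 = ("Cross-reference the client's priorities below with the attached market data. "
         "Identify 3 concrete opportunities and 2 potential risks. Format in a table.\n\n"
         "Priorities:\n{priorities}\n\nMarket data:\n{market_data}")
STEP3 = ("Using the identified priorities, opportunities, and risks, generate a draft for a "
         "3-pillar market entry strategy. For each pillar, suggest key objectives and two "
         "potential tactical initiatives.\n\nPriorities:\n{priorities}\n\n"
         "Opportunities and risks:\n{analysis}")


def run_chain(transcript: str, market_data: str,
              researcher: Model, analyst: Model, drafter: Model) -> dict[str, str]:
    """Run the three steps in order, feeding each output into the next prompt."""
    priorities = researcher(STEP1.format(transcript=transcript))
    analysis = analyst(STEP2.format(priorities=priorities, market_data=market_data))
    draft = drafter(STEP3.format(priorities=priorities, analysis=analysis))
    # Keep every intermediate so the human reviewer can trace where a claim came from.
    return {"priorities": priorities, "analysis": analysis, "draft": draft}
```

Passing a different callable for each step reflects the practice described above of using different models for different stages.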

The results and cognitive shift:

- Operational result: The time to create a flagship deliverable was reduced by over 85%, from several days to a matter of hours.

- Strategic result (the true change): The firm could take on more clients, yes, but more importantly, the quality and depth of their strategic advice improved. The humans were no longer bogged down in information logistics; they were elevated to high-level judgment, creativity, and client relationship building.

The human quality AI can never replace: moral imagination

There is one fundamental question I believe we must all confront: "As we build systems that can think, what is the non-negotiable human quality we must design to never be automated?"

This question moves beyond frameworks and governance into the heart of our humanity in an AI-first world.

My answer is: "Moral imagination."

Moral imagination is the uniquely human capacity to not just follow an ethical rule, but to feel the consequences of a decision for everyone it touches. It's the ability to empathize with stakeholders who aren't in the data set, to care about long-term impacts that won't show up on a quarterly report, and to make a choice that is right, not just one that is rational or efficient.

An AI can be trained on every ethical framework ever written. It can be programmed with flawless rules. But it cannot imagine the quiet despair of a worker displaced without a transition plan, or the fraying of the social fabric when a community is optimized out of existence. It cannot feel the weight of a future we are creating for generations we will never meet.

This is the irreducible core of my work in Responsible AI. It’s not just about installing guardrails; it’s about ensuring that a well of empathetic, compassionate, and courageous human judgment rests at the very center of every autonomous system we create.

Because if we automate everything except the bottom line, we will have optimized our way into a world without a soul. Our ultimate responsibility is to ensure that our intelligence, even as it becomes artificial, remains deeply and irrevocably human.

How to build moral imagination into leadership systems and rituals

I approach moral imagination not as a "soft skill" workshop, but as a strategic operating system upgrade. We install new mental software through three interconnected layers: Mindset, Process, and Systems.

Layer 1: Mindset — cultivating an ethical lens

This is about rewiring how leaders perceive their decisions.

- The "who else?" reframe: We train leaders to automatically append this question to every major decision. Not just "What is the ROI?" but "Who else is affected by this that we haven't considered?" This forces the cognitive leap beyond shareholders and immediate customers to communities, employees, the environment, and future generations.

- From stakeholders to "moral patients": We introduce the philosophical concept of a "moral patient" — an entity that can be harmed or benefited, even if it has no voice in the decision. This expands the map to include non-human life, the data of anonymous individuals, and the integrity of the public discourse.

- Leader as "Chief Ethicist": I reframe their role. Their primary job is no longer just to maximize value, but to steward the ethical integrity of the organization's impact. This is a profound shift in identity and purpose.

Layer 2: Process — installing ethical rituals

This is where moral imagination becomes a repeatable, meeting-based practice. We install specific rituals into the leadership team's rhythm.

- The "pre-mortem of consequences" workshop:

- When: During the planning phase of any major initiative (new product, market entry, large-scale automation).

- How: I facilitate a session with the prompt: "It's one year from today. Our project has failed in the eyes of society. What is the newspaper headline? Write it down."

- Output: Leaders share headlines like: "Local Paper: 'Automated Hiring Tool Found to Systematically Reject Graduates from State Colleges.'" We then work backward to identify the design and data choices that would lead to that outcome and change them now.

- The "moral stakeholder map":

- When: A standard part of any business case review.

- How: Alongside the financial model, we create a visual map. In the center is the decision. We then map all affected parties in concentric circles, moving far beyond the usual suspects to include "competing ecosystems," "future job seekers," and "municipal services."

- Output: A tangible artifact that makes invisible stakeholders visible, forcing a discussion about their potential harms and benefits.

- The "red team ethics" mandate:

- When: For every AI-driven system or algorithm before deployment.

- How: A sub-team or an external advisor is formally tasked with a single mission: "Find the ethical flaws. Attack this proposal not on its business logic, but on its potential for unseen harm, bias, or social erosion."

- Output: A formal "Ethical Risk Assessment" that must be addressed before proceeding, creating a mandatory checkpoint for conscience.

Layer 3: Systems — engineering moral guardrails

This is about baking moral imagination into the very structure of the organization.

- The "ethical tollgate" in project lifecycles: Just as a project must pass a financial review, it must pass through defined ethical tollgates. These are checkpoints with clear criteria (e.g., "Bias audit completed," "Community impact assessment reviewed," "Data provenance verified").

- Incentive structures and OKRs: We redefine success. A leader's Objectives and Key Results (OKRs) now include metrics like:

- "Reduce algorithmic bias in our core product by X% as measured by [specific fairness metric]."

- "Achieve a Y score on our annual 'Trust & Transparency' stakeholder survey."

- This signals that ethical performance is as valued as financial performance.

- The "moral imagination" dashboard: We operationalize ethics by creating a live dashboard that tracks leading indicators of moral risk — e.g., sentiment analysis on employee feedback regarding new tech, fairness scores of algorithms in production, diversity of data sets used for training. This makes the abstract concept of "ethics" a tangible, manageable variable.
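The OKR and dashboard items above leave the fairness metric unspecified. As one illustration, not necessarily the metric Aman's clients use, the sketch below computes per-group selection rates, the demographic parity difference (highest rate minus lowest), and the disparate impact ratio (lowest rate divided by highest). The group labels and the `parity_report` helper are hypothetical; the example data mirrors the hiring-tool headline from the pre-mortem.

```python
from collections import defaultdict


def selection_rates(decisions: list[tuple[str, bool]]) -> dict[str, float]:
    """Share of positive outcomes (e.g. 'advance to interview') per group."""
    totals: dict[str, int] = defaultdict(int)
    positives: dict[str, int] = defaultdict(int)
    for group, selected in decisions:
        totals[group] += 1
        positives[group] += int(selected)
    return {g: positives[g] / totals[g] for g in totals}


def parity_report(decisions: list[tuple[str, bool]]) -> dict[str, float]:
    """Two common group-fairness summaries; expects at least one decision."""
    rates = selection_rates(decisions)
    lowest, highest = min(rates.values()), max(rates.values())
    return {
        "demographic_parity_difference": highest - lowest,
        "disparate_impact_ratio": lowest / highest if highest else 1.0,
    }


if __name__ == "__main__":
    # Hypothetical screening results: 2 of 10 state-college graduates advance vs 5 of 10 others.
    data = [("state_college", i < 2) for i in range(10)] + [("other", i < 5) for i in range(10)]
    print(parity_report(data))
```

In this example the ratio is 0.4, well below the "four-fifths" (0.8) rule of thumb often cited in US hiring guidance, which is exactly the kind of signal a moral imagination dashboard would surface. A key result such as "reduce bias by X%" could then be tracked as a reduction in the parity difference.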

The outcome of this implementation is not a team that has all the ethical answers, but a team that instinctively asks better, more profound questions.

They stop seeing ethics as a constraint and start seeing it as the ultimate source of long-term resilience, brand trust, and competitive advantage. They transition from being managers of a business to being stewards of a legacy, architecting organizations that are not only intelligent but also wise and just.

This is the practical path to ensuring that our pursuit of the possible never loses its moral compass.

Key advice for leaders navigating the AI era responsibly

My advice is built on a single, unwavering premise: We are not just adopting a new technology; we are midwifing a new paradigm for human work and intelligence. This requires a fundamental shift in mindset.

For my peers — the advisors, the futurists, the architects of change:

- Transition from solutioneer to sense maker: Our value is no longer in having the proprietary methodology or the secret playbook. It is in helping our clients make sense of the chaos. Stop selling "AI solutions" and start guiding them through the process of 'asking the right questions of the future.' Your role is to be a translator and a guide at the frontier.

- Embrace intelligent orchestration: You don't need to be the master of every tool, but you must become a master of orchestrating them. Build a meta-skill in designing human-AI collaborative workflows. Your new expertise is in designing the symphony, not just playing a single instrument.

- Champion the ethical core: In a world rushing toward efficiency, be the unflinching voice for responsibility. Integrate ethics directly into your frameworks and proposals — not as a separate chapter, but as the foundation. This is no longer a niche; it is the core differentiator for sustainable, trustworthy transformation.

In a broader sense, my advice to all leaders is this:

- Lead as a curator of context, not a controller of process: Your most critical role is now to define and constantly refine the "Company Constitution" — the purpose, values, and strategic boundaries within which your augmented teams and AI agents will operate. You provide the "why"; the technology figures out the "how".

- Invest in friction, not just flow: The greatest risk is not slow progress, but unchecked, automated failure. Intentionally build 'ethical tollgates' and 'red team' prompts into your workflows. Mandate that every AI-generated strategy be critiqued by a different AI model. Slow down to validate, so you can scale with confidence.

- Model intellectual humility, not omniscience: The most powerful thing a leader can say today is, "I don't know, but let's task our AI copilot to explore the possibilities with us." By demonstrating how to question, refine, and collaborate with AI, you create a culture of learning and augmentation, not one of fear and replacement.

Follow along

You can follow Aman as he continues to explore ethics and efficacy in AI leadership by connecting on LinkedIn. And don't miss his work at:

More expert interviews to come on People Managing People!