Trust Issues: Americans fear AI misuse by leaders more than AI itself, a sign of deeper trust issues.

Workplace Sentiments: Workers evaluate AI by leadership actions and past power misuse, not technical merits.

EU Comparison: Europeans share AI anxieties but, unlike U.S. workers, benefit from regulatory frameworks.

Employer Trust: U.S. employers are trusted more than other institutions, but trust remains fragile and uneven.

Leadership Strategies: Successful AI adoption requires transparency and the involvement of employees at every level.

The more I talk to people, regardless of what industry they're in, the more one thing becomes clear. Americans aren't afraid of AI, or technology in general. They're afraid of what people in charge will do with it.

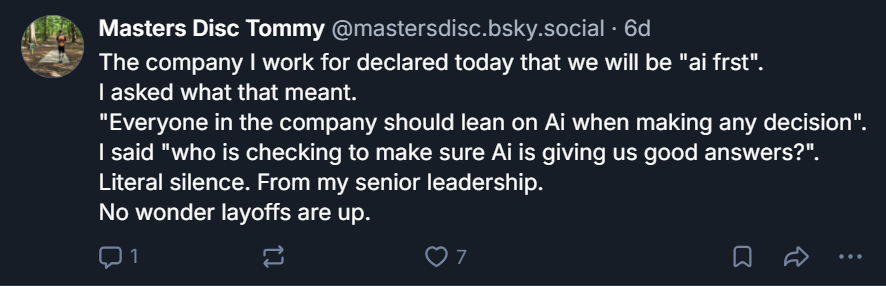

That distinction matters more than any benchmark or product launch, because it reframes the entire conversation about AI adoption in the workplace. The dominant narrative treats resistance to AI as a literacy problem, something that better training and sleeker onboarding can fix. But the data tells a different story.

The anxiety gripping American workers is rooted in decades of broken promises from the institutions that employ them, govern them, and claim to represent their interests.

The 2025 Edelman Trust Barometer places the United States among the five least-trusting large economies in the world, with a Trust Index score of 47 out of 100. Japan, Germany, the UK, and France round out that group.

Sixty-one percent of global respondents report a moderate or high sense of grievance, defined as the belief that government and business serve narrow interests while regular people struggle. In the U.S., the picture is sharper: nearly three times as many Americans reject the growing use of AI as embrace it, 49% to 17%.

Those numbers don’t describe a population confused by technology. They describe a population that has watched corporate leaders overpromise and underdeliver for a generation, and now sees AI as the next vehicle for that cycle.

The workplace data reinforces this. Deloitte’s TrustID Index tracked a 31% decline in trust in company-provided generative AI between May and July of 2025. Trust in agentic AI, systems that can act independently rather than just recommend, collapsed by 89% in the same window.

Workers aren’t evaluating the technology on its technical merits. They’re evaluating it based on who controls it and what those people have done with power in the past.

Edelman’s AI-specific flash poll, conducted across five nations in late 2025, found that employees are 2.5 times more motivated to embrace AI when they feel their job security is increasing. Two in three AI distrusters said adoption feels forced, not voluntary. And when asked who they trust to tell the truth about AI, workers chose peers over CEOs or government leaders by a factor of two.

That last finding is the quiet indictment. Workers don’t want to hear about AI from the C-suite. They want to hear about it from someone who sits next to them and has nothing to gain from spinning the message.

A Different Kind of Fear

Compare this to Europe. Europeans are not especially enthusiastic about AI either. Germany and the UK both sit in the bottom tier of the Trust Index. But European workers operate inside a fundamentally different structure. Some key differences:

- The EU AI Act regulates high-risk AI applications in employment

- Works councils give employees a formal voice in how technology gets introduced

- Countries like Germany and France require consultations before mass redundancies

- The European Trade Union Confederation is pushing for a dedicated directive on algorithmic systems at work, including mandatory human oversight of AI-driven decisions and collective bargaining rights around deployment.

The EU is also building toward what policy advocates are calling an “AI Social Compact,” a framework tied to the European Social Fund designed to support workers through income assistance, reskilling, and job transitions during AI-driven disruption. The existing European Globalisation Adjustment Fund already provides a model, albeit an underfunded one, for helping displaced workers land on their feet.

None of this means European workers are relaxed about AI. But their anxiety takes a different shape. They worry about surveillance, data privacy, and corporate overreach. They channel concern through regulatory frameworks and collective bargaining structures that, however imperfect, give them a seat at the table. When a German worker hears that AI might reshape their role, they can reasonably expect that someone in government or in their union has at least considered what happens next.

American workers have no such expectation. The stakes are structurally higher in the U.S., where health insurance is largely tied to employment. Losing a job doesn’t just mean losing income. It means losing the safety net entirely.

In the United States, at-will employment is the default. Unionization rates hover near historic lows. There is no federal framework governing AI in the workplace. When an American worker hears that AI might reshape their role, the reasonable question is: and then what? Who catches me?

The answer, increasingly, is nobody. And workers know it.

The Employer as Last Resort

This is where the story gets complicated for leaders reading this piece. Even as trust in government and media craters, employers remain the most trusted institution in the U.S.

McKinsey’s 2024 employee survey found that 71% of workers trust their own employer to deploy AI ethically, a higher rate than they gave to universities, large tech companies, or startups. Edelman’s data shows 66% of employees trust their employer to do what’s right. That number held across age, gender, income, and political lines.

But that trust is fragile. Globally, employer trust dropped 3 points in 2025, the first meaningful decline since employers took the top spot in 2021. And the trust is unevenly distributed. Edelman found a 13-point gap between workers in the lowest and highest income quartiles. Sixty-five percent of Americans in the bottom income quartile believe they will be left behind by AI. Half of the middle-income respondents feel the same way.

Whitney Munro, CEO and founder of FLEX Partners, sees this playing out in real time. She says:

“In the organizations we advise, AI adoption outcomes across sectors correlate almost perfectly with pre-existing trust levels between leadership and staff.”

Where employees felt they were communicated with honestly during previous periods of change, they’re more optimistic and more willing to give AI a chance. Where the opposite occurred, with decisions made in a vacuum and no communication, AI becomes what she calls “a lightning rod of conflict.”

That’s when employees start asking whether the rollout is really about productivity or about cutting headcount, and they start feeling expendable.

The people caught in the middle of that tension are the VPs, directors, and senior managers tasked with carrying the message down.

Kelly Meerbott, a leadership coach, CEO of You Loud and Clear, and a TEDx speaker on psychological safety, describes them as “human shock absorbers” between the C-suite’s priorities and the workforce’s lived reality.

“They are exhausted,” she says, “from having to translate executive talking points into something their teams can actually believe. And when those middle leaders don’t believe the message themselves, the entire chain of trust collapses before AI ever reaches the frontline worker.”

How a CHRO or COO handles that tension in the next two years will define whether AI becomes a trust-building exercise or the final breach.

The Edelman data offers a clue about what works. Peer-to-peer communication about AI is roughly twice as effective as top-down messaging. Voluntary adoption outperforms mandated rollouts. And the single most powerful driver of AI trust is personal experience: specifically, the experience of AI actually helping someone do their job better.

Which means the path forward is less about strategy decks and more about the daily experience of work. Does the person on the warehouse floor or in the regional sales office believe that the AI tool their company just rolled out, whether for daily tasks or performance evaluation processes, is there to make their life better? Or do they believe it’s there to build the case for eliminating their position in eighteen months?

The technology doesn’t answer that question. The relationship between worker and employer does. And in a country where institutional trust has been declining for decades, that relationship is carrying weight it was never designed to bear.

Trust Follows People, Not Platforms

The research points to a pattern that should give leaders some direction. Trust in AI doesn’t start with the AI. It follows an older, more human logic.

People adopt what they feel safe enough to explore. They explore what someone they trust has already tried. And they commit when the experience confirms what they were told: that this tool is here to help them, not replace them.

That sequence is worth studying, because it runs counter to how most companies approach a technology rollout. The standard playbook is top-down: executive announcement, company-wide training, adoption metrics, performance integration.

Every step in that sequence reinforces the power dynamic that workers already distrust. It tells them AI is happening to them, not with them. In an environment where two-thirds of resisters already feel AI is being forced on them, that playbook isn’t just ineffective. It’s accelerating the problem.

David Kolodny, co-founder of the startup studio Wilbur Labs, which has built and invested in over 21 technology companies since 2016, sees this from the builder’s side.

“One strong opinion I have from building with automation for so long is that the fundamentals still matter just as much as they always have,” he says. “Automation requires thoughtful setup, rigorous QA, and careful ongoing monitoring.”

The rush to deploy, in other words, is where trust goes to die. “It’s an order of magnitude easier to break trust than build it up,” Kolodny adds, “and that’s why there are so many individuals who are tepid about the technology.”

Munro argues the shift has to be more fundamental than pacing.

“You cannot roll out AI as a tech deployment,” she says. “It has to be cultural.”

That means taking some clear actions:

- Explicitly stating what AI will and will not be used for.

- Clarifying whether workforce reductions are part of the long-term strategy, rather than letting employees fill the silence with their worst assumptions.

- Involving teams in early testing so they’re part of the process, and being honest about the trade-offs rather than celebrating productivity gains that workers suspect come at their expense.

- Checking that actions around surveillance align with stated values. Using AI for “gotcha” monitoring will turn employees away immediately and confirm every fear they already had.

What the trust data suggests underneath all of this is almost deceptively simple. Start small. Let people opt in. Find the workers who are genuinely curious, not the ones performing enthusiasm for management, and give them room to experiment without scorecards.

When those people come back and tell their colleagues that a particular tool saved them two hours on a report or helped them catch a pattern they would have missed, that testimony carries more weight than any keynote because it comes without an agenda.

The Case for Uncertainty

Leaders in the C-suite also have to be willing to say what they don’t know. The Edelman data found that two-thirds of workers in developed nations believe business leaders won’t be fully honest about AI’s impact on jobs. That expectation of dishonesty is already baked in.

The only way to disrupt it is radical transparency about what AI will change, what it won’t, and where the company genuinely doesn’t have an answer yet. Meerbott puts it more directly.

“Tell other leaders you’re confused, or don’t have the answer,” she says. “Shock them with your humanity.”

That advice cuts against decades of executive conditioning, but it maps precisely to what the trust data reveals. Most executives are trained to project certainty. In this environment, certainty reads as spin. Admitting uncertainty, paired with a visible commitment to figuring it out alongside employees rather than above them, is a more credible posture than any polished transformation narrative.

American AI anxiety is a cultural artifact, the product of a society that has systematically eroded the structures that help people feel safe during periods of disruption. Europe built guardrails before the car started moving. The U.S. is asking workers to trust the driver while the car accelerates, even though the driver’s track record is, at best, mixed.

“I don’t think Americans are inherently more afraid of AI than their counterparts,” Munro says. “I just think we are more skeptical of power structures that haven’t consistently demonstrated trust and reciprocity.”

That skepticism didn’t come from nowhere. It was earned. And for CHROs and COOs navigating this moment, it is the operating environment.

Every AI rollout, every automation decision, every workforce restructuring is being filtered through a trust deficit that existed long before anyone typed a prompt into ChatGPT. Acknowledging that deficit is the first step. Closing it is the work of the next decade.