Deployment Drop: AI agent deployment has declined, revealing a critical shift in organizational focus towards infrastructure.

Investment Shift: Despite a drop in deployment, investment in AI continues to rise, with budgets allocated strategically towards long-term goals.

Transformation Focus: Successful organizations prioritize workflow redesign and data readiness over mere AI technology implementation.

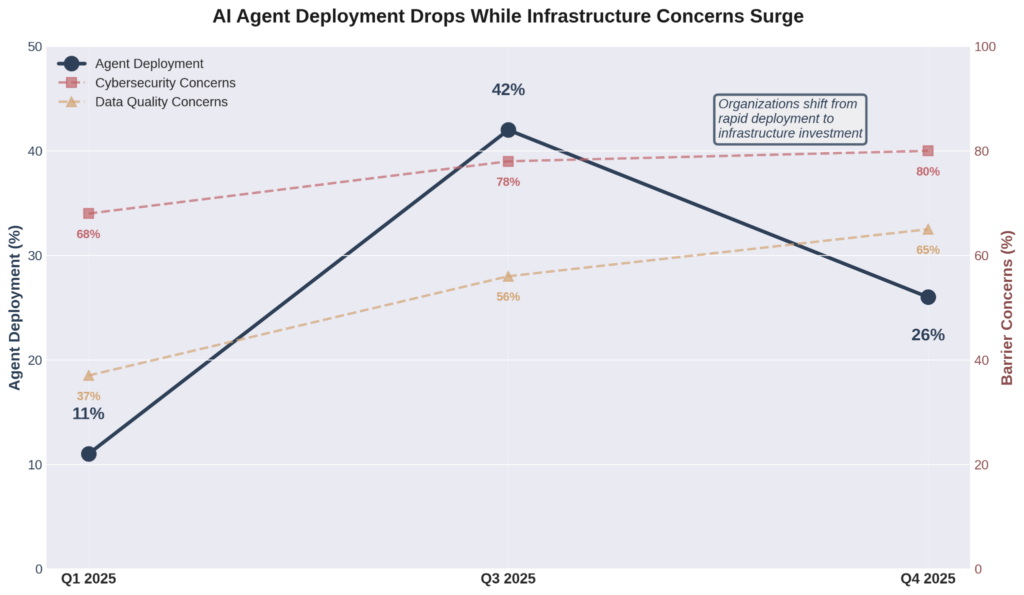

When KPMG reported that AI agent deployment fell from 42% to 26% between the third and fourth quarters of 2025, most analysts missed the point. They saw retreat. I see the first honest signal in two years of AI hype.

The paradox is sharp: 67% of CEOs now expect returns on AI investments within one to three years, shortened from the three-to-five-year horizon anticipated just 12 months earlier.

Investment continues to climb, with 69% of executives allocating 10-20% of their total budgets to AI over the next year. CEO confidence in the global economy sits at a five-year low, yet AI spending accelerates.

Then why are deployment numbers dropping?

Because executives are waking up to a reality the tech press has been reluctant to examine: pilot programs predict almost nothing about production success. Organizations that rushed to demonstrate AI capabilities in 2024 are now facing the consequences.

According to multiple industry surveys, 46% of AI proof-of-concepts were scrapped before reaching production in 2025. That's double the abandonment rate from just one year prior.

The companies pulling back aren't giving up. They're getting serious.

From AI Theater to Infrastructure Reality

KPMG's interpretation of its own data contradicts the surface-level reading. Its analysts observe that leading enterprises are professionalizing agent systems rather than abandoning them.

Investment and engineering capacity have shifted toward production-grade, orchestrated agents with proper governance, monitoring, security, and integration. The work looks less impressive in quarterly reports but sets the stage for systems that actually function at scale.

This distinction matters because most organizations still don't understand what it takes to separate AI theater from genuine transformation. The barriers they're hitting aren't technical curiosities. They're fundamental infrastructure gaps:

- Data quality: The share of leaders citing it as critical jumped from 37% to 65% in a single year

- Cybersecurity: Now identified by 80% as the greatest obstacle to AI strategy goals, up from 68%

- System complexity: 65% have cited agentic system complexity as their top barrier for two consecutive quarters

- Investment shift: Half of executives now plan to spend $10-50 million specifically on securing agentic architectures and hardening model governance

These aren't the spending patterns of organizations chasing the next demo. These are the economics of infrastructure.

The 88% Problem

The pilot-to-production gap has been documented repeatedly, but rarely confronted honestly.

Research shows that for every 33 AI prototypes a company builds, only four make it into production. The failure rate for scaling AI initiatives runs approximately 88%, more than double the failure rate of non-AI technology projects.
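For readers who want to check the arithmetic behind that 88% figure, here is a minimal sketch. It uses only the two numbers quoted above (33 prototypes, four in production); the variable names are my own, and it assumes the failure rate is simply the share of prototypes that never reach production.

```python
# Figures quoted in the article: 33 prototypes built, 4 reach production.
prototypes_built = 33
reached_production = 4

# Assumption: "failure" means a prototype never reaches production.
success_rate = reached_production / prototypes_built
failure_rate = 1 - success_rate

print(f"Success rate: {success_rate:.1%}")  # Success rate: 12.1%
print(f"Failure rate: {failure_rate:.1%}")  # Failure rate: 87.9%
```

Rounded to the nearest whole percent, that matches the roughly 88% failure rate cited.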

In 2025, S&P Global Market Intelligence found that 42% of companies abandoned most of their AI initiatives, a dramatic spike from 17% the previous year.

The pattern is consistent across industries: adoption is easy, pilots proliferate, but integration remains rare and production deployment with measurable outcomes proves vanishingly scarce.

What Separates Theater from Transformation

What separates the organizations that stall from those that scale isn't model sophistication or computing power. McKinsey's 2025 AI survey confirms that organizations reporting significant financial returns are twice as likely to have redesigned end-to-end workflows before selecting modeling techniques.

The winners start with value pools rather than use cases, asking where they lose the most margin or time rather than what AI can technically accomplish.

Air India's virtual assistant AI.g, which now processes over four million queries with 97% automation, emerged from a specific constraint: their contact center couldn't scale with passenger growth. They didn't build it to prove they could deploy AI. They built it because routing routine queries through humans was breaking their operation.

The difference is philosophical as much as operational. Organizations treating AI as technology implementation focus on model performance and inference speeds. Organizations treating AI as business transformation focus on data readiness, workflow redesign, and the division of labor between humans and machines.

The first group launches pilots that look good in board presentations but never escape the lab. The second group ships products.

This distinction explains why data infrastructure now consumes 50-70% of AI budgets and timelines in successful deployments, a complete inversion of typical spending ratios.

It explains why 72% of organizations plan to deploy agents exclusively from trusted technology providers rather than building custom solutions. And it explains why the most sophisticated executives are slowing down rather than speeding up.

Why Pulling Back Is the Smarter Play

The drop in deployment numbers should be read as maturation, not failure. Rushing forward with pilot after pilot is simply not the way to lead on AI implementation. Put plainly: stop optimizing for internal presentations and start optimizing for operational reality.

The real inflection point arrives when executives stop asking whether AI delivers ROI and start asking whether their infrastructure can support what AI requires. That shift separates the organizations positioning for what comes next from those that will spend 2026 explaining why their 2024 demos never materialized into production value.

If there’s one bit of parting advice I can provide, it’s to stop focusing on the volume of AI initiatives or attempted agent deployments and start building the governance frameworks, data pipelines, security architectures, and integration standards that allow each new agent to strengthen the system rather than add fragility. That work rarely generates headlines. It doesn't demo well. But it's the only work that matters.

When KPMG reports deployment numbers rising again in two quarters, we'll know which organizations used the pause to build foundations and which ones just kept running pilots.