360-degree feedback, also known as multi-source feedback, multi-rater feedback, or a 360 review, is a method of gathering feedback from multiple sources to aid in employee evaluation and development.

With the help of 360-degree feedback software, it's possible to collect rich, development-focused feedback about each employee in your organization.

While there are some key considerations around the use of this appraisal method, in general I’m a fan.

Here I’ll help you understand what 360-degree feedback is, why it's valuable, what not to use it for, and how to run a 360-degree feedback process.

What is 360-Degree Feedback?

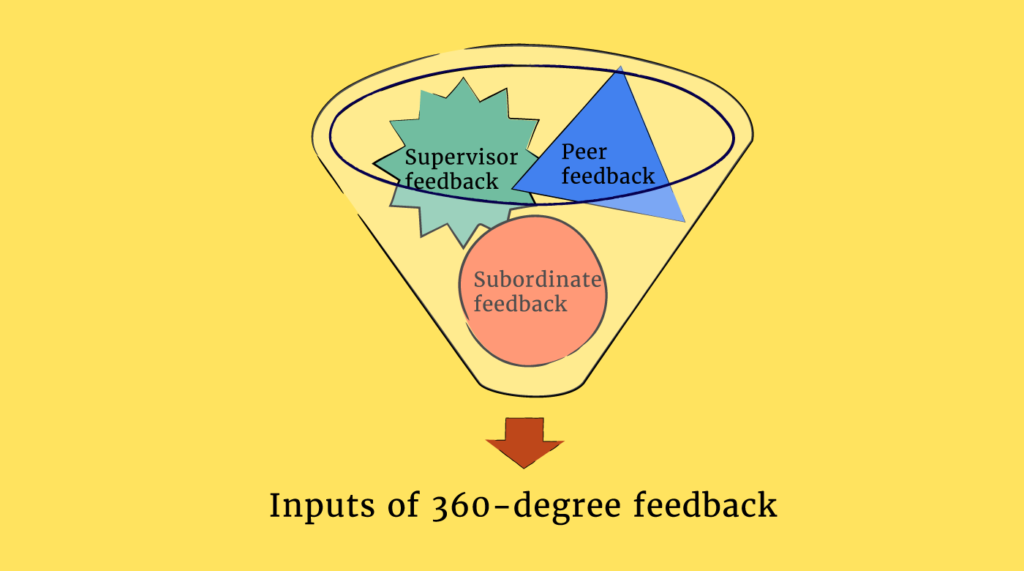

360-degree feedback is a performance evaluation method in which a team member receives feedback from multiple sources, including supervisors, peers, subordinates, and sometimes even customers.

The idea is that, by incorporating the different perspectives of those that work most closely with someone, 360-degree feedback provides a well-rounded view of an individual's strengths and areas for improvement.

This kind of feedback is typically used for personal development, to supplement performance reviews, and to provide upward feedback such as leadership assessments/manager reviews, rather than as a basis for making decisions about promotions or salary increases.

360-Degree Feedback Pros And Cons

While 360-degree feedback can be useful for boosting performance, there are some potential downsides to consider.

| Pros | Cons |
| --- | --- |
| More comprehensive feedback | Bias and inaccuracy |
| Increases self-awareness | More resource intensive |
| Enhances team collaboration | Misuse for performance evaluation |
| Builds a feedback culture | |

360-degree feedback pros

Comprehensive feedback

Involving feedback from multiple sources (e.g., peers, supervisors, subordinates) offers a more well-rounded evaluation of an employee's performance.

It captures different perspectives on their work, making the feedback richer and more diverse than a single-manager review.

Increases self-awareness

Employees receive diverse perspectives on how they’re viewed in the organization and how their behavior impacts others, fostering greater self-awareness and personal growth.

Enhances team collaboration

Encouraging feedback from peers and direct reports promotes a culture of open communication, trust, and collaboration across teams.

Builds a feedback culture

Regularly practicing 360-degree feedback helps cultivate a workplace that values constructive feedback, continuous performance management and improvement, and accountability.

Potential cons of 360-feedback

Bias and inaccuracy

Feedback can be based on a lack of knowledge or influenced by personal biases, favoritism, or conflicts, which may result in unfair or inaccurate evaluations (although it’s hoped that garnering multiple perspectives will mitigate this bias).

This is why it’s important to carefully select participants and to have a clear, well-explained feedback system. This brings us onto...

More resource intensive

Designing, gathering, analyzing, and delivering 360-degree feedback requires extra time and resources, which can strain both the feedback providers and the HR team. However, 360-feedback software and performance management tools can help.

Misuse for performance evaluation

When used as a performance rating tool, 360-degree feedback can create anxiety and reduce openness, limiting its effectiveness as a developmental tool.

How To Run A 360-Degree Feedback Process

As mentioned briefly above, effective 360-degree feedback requires careful planning, and certain conditions need to be met.

Here’s how to run a successful 360-feedback process that will be as fair and accurate as possible.

Step 1: Identify and communicate the purpose

For the 360-degree feedback process to work, you need to educate all team members on how the process facilitates professional development and clearly state whether it is tied to formal appraisal and compensation.

All participants should understand the benefits of feedback more generally and the benefits of using a multisource feedback process for development.

They should also know what the process looks like in practice, and understand how the results from the process will be used.

A communication plan should include other important features of the 360-degree feedback process like confidentiality, anonymity, process timeline, and the kinds of things included in the feedback survey.

Step 2: Identify who will provide input

WARNING: This step is high-risk!!

This is where 360-degree performance evaluations can go from helpful to completely unhelpful and biased.

When an organization begins preparing to conduct 360-degree feedback, managers often ask their employees to identify a handful of people that they feel would be able to provide feedback about their performance at work.

This is where the entire process can be derailed. When you ask people who should provide feedback about them, you immediately invite significant bias into the evaluation process.

Instead, managers should seek to identify a handful of people that each of their employees actively collaborates with at work. Who do they serve? Who relies on them for effective work outcomes? Who are their stakeholders? Who is impacted by their work?

If the feedback recipient, for example, is a Customer Experience Manager with aspirations to continue growing within the organization, valuable sources of feedback may include:

- The Customer Experience Manager (self-review)

- Members of this manager’s team

- The Customer Experience Manager’s manager

- Peer Customer Experience Managers

- Customer feedback (especially in cases where this manager directly interacts with specific customers)

- Other individuals/departments from across the organization with whom this manager interacts somewhat frequently (e.g., sales, operations, and HR).

Step 3: Define what gets evaluated

The third step in soliciting and administering 360-degree feedback is to define the relevant areas of performance the feedback is meant to address.

These performance dimensions should be derived from current job analyses, or based on senior management’s beliefs about the behaviors they want to develop and reward in the future.

A helpful question to ask yourself when defining these dimensions is “What behaviors should we expect from a high performer in a given competency, and how often should we expect these behaviors?”

In the case of the Customer Experience Manager from Step 2, we might consider performance dimensions related to team management and the performance of the whole department.

We might also consider behaviors we would expect from a high-performing Customer Experience Manager preparing for an increase in the scope of their responsibilities.

Ratings can be based on evaluations (e.g., 1 = Poor Performance, 5 = Excellent Performance) or on the frequency of a behavior (e.g., Never does this, Sometimes does this, Always does this).

Step 4: Decide on how feedback is measured

The fourth step in preparing to deliver 360-degree feedback involves the design of the multisource feedback process.

This covers the survey’s scale format (how behaviors and performance are measured) and the availability of commentary to supplement ratings.

A commonly used format is the Likert scale, which asks raters to score each performance dimension on a numeric scale, e.g. 1-6 (1 = completely disagree, 6 = strongly agree). It's simple but gives the rater enough flexibility to distinguish between merely average performance and high performance.

In the case of the Customer Experience Manager, let’s assume a number of the items asked on the feedback survey relate to this manager’s leadership. We’ll use a 6-point Likert scale like so:

- 1: Completely disagree/Hardly ever

- 2: Disagree/Mostly not

- 3: Somewhat disagree/Usually not

- 4: Somewhat agree/Usually yes

- 5: Agree/Most of the time

- 6: Strongly agree/Almost always

Usually, 360-degree feedback presents different questions to different reviewers, based on their relationship to the person being evaluated.

Often the split is by whether the reviewer is a direct report (DR), a customer (CX), the manager (MGR), or a peer (PR) of the person being evaluated.

Items one might expect on the survey could include the following, with the intended reviewer group shown in parentheses:

- This individual supports me in meeting my personal and professional goals (DR)

- This individual provides helpful, ongoing feedback to help my performance (DR)

- This individual is responsive to my requests when I need to escalate a concern (CX)

- This individual takes time to support their direct peers and help mentor new managers (PR)

- This individual contributes value to team meetings (MGR, PR)

- This individual demonstrates accountability for their teams’ results (MGR)

In addition to submitting numerical ratings on the feedback form, it could be helpful to include room for additional commentary or feedback. This is so raters can provide specific information leading to the score or offer feedback not measured on the survey.
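As a rough illustration of the routing described above, the sample items can be tagged with the reviewer groups that should see them. This is a minimal Python sketch, not a feature of any particular 360-degree feedback tool: the `SurveyItem` class and `items_for` function are hypothetical names, and the codes are assumed to mean DR = direct report, CX = customer, PR = peer, MGR = manager.

```python
from dataclasses import dataclass

# Assumed reviewer-group codes: direct report, customer, peer, manager.
RATER_GROUPS = {"DR", "CX", "PR", "MGR"}


@dataclass(frozen=True)
class SurveyItem:
    """One survey statement plus the reviewer groups that should rate it."""
    text: str
    groups: frozenset


# The sample items from the list above, tagged with their reviewer groups.
ITEMS = [
    SurveyItem("Supports me in meeting my personal and professional goals",
               frozenset({"DR"})),
    SurveyItem("Provides helpful, ongoing feedback to help my performance",
               frozenset({"DR"})),
    SurveyItem("Is responsive to my requests when I need to escalate a concern",
               frozenset({"CX"})),
    SurveyItem("Takes time to support their direct peers and help mentor new managers",
               frozenset({"PR"})),
    SurveyItem("Contributes value to team meetings",
               frozenset({"MGR", "PR"})),
    SurveyItem("Demonstrates accountability for their teams' results",
               frozenset({"MGR"})),
]


def items_for(group: str) -> list:
    """Return only the items that a reviewer in `group` should be asked."""
    if group not in RATER_GROUPS:
        raise ValueError(f"Unknown reviewer group: {group!r}")
    return [item for item in ITEMS if group in item.groups]
```

Calling `items_for("PR")` would return the two peer-facing items, so each reviewer only sees statements they are in a position to judge.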

Step 5: Analyze the feedback data

Once surveys have been submitted, the data can be compiled, organized, and analyzed.

One way of doing this is to give the recipient normative data, so they can compare their results with summary data from other participants in the process (e.g., the average item scores of the other Customer Experience Managers who were rated).

However, while this might be motivational in some highly competitive teams and cultures, be careful not to discourage people too much when they compare their scores to those of their peers. It can backfire, so read the situation carefully!

Feedback can also be broken down by source type or relationship to the reviewee (for example, direct reports versus peers versus manager). This split is a useful basis for comparison: differences in how each group rates the same behaviors can be very insightful for the person receiving the feedback.
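To make the source-type split concrete, here is a small Python sketch that averages ratings per reviewer group and compares one average with a norm. The response data, the group codes, and the norm value are made up for illustration, and `average_by_group` and `gap_to_norm` are hypothetical helper names rather than part of any real tool.

```python
from collections import defaultdict
from statistics import mean

# Made-up responses: (reviewer group, survey item, score on the 6-point scale).
responses = [
    ("DR", "ongoing feedback", 5),
    ("DR", "ongoing feedback", 4),
    ("PR", "team meetings", 3),
    ("MGR", "team meetings", 5),
    ("MGR", "accountability", 6),
    ("SELF", "team meetings", 6),
]


def average_by_group(rows):
    """Average all scores per reviewer group, rounded to two decimals."""
    scores = defaultdict(list)
    for group, _item, score in rows:
        if not 1 <= score <= 6:
            raise ValueError(f"Score {score} is outside the 1-6 scale")
        scores[group].append(score)
    return {group: round(mean(values), 2) for group, values in scores.items()}


def gap_to_norm(recipient_avg, norm_avg):
    """Positive means the recipient scored above the comparison group."""
    return round(recipient_avg - norm_avg, 2)


averages = average_by_group(responses)
# Assumed norm: the average score across other managers who were rated.
dr_gap = gap_to_norm(averages["DR"], 4.0)
```

With this sample data, direct reports average 4.5 and the manager averages 5.5, while the self-rating is 6. Gaps like these between groups are often a better conversation starter than a single overall score.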

Step 6: Deliver the feedback

The sixth step in implementing and using a 360-degree feedback process involves the delivery of said feedback. There are a couple of considerations relating to feedback delivery:

- The shape and feel of the delivery itself

- Who gets to deliver it.

How to deliver feedback

Feedback ought to be explained objectively and holistically so that the recipient internalizes it and pays attention to both the areas in which they scored highly and the areas noted for improvement.

One way to do this is for the feedback deliverer to support the recipient in identifying whether areas for improvement (i.e., the presence of undesirable behaviors or the absence of desirable ones) highlighted in the feedback summary are related to motivation or ability.

To address areas for improvement stemming from motivational issues, feedback can involve the inclusion of rewards to reinforce the desired behavior change.

To address areas for improvement related to ability, constructive feedback might involve training, mentorship, or other ways that encourage the recipient to learn how to behave in the desired way.

Some useful resources here include how to give feedback and negative feedback examples.

Who should deliver feedback?

The next consideration is who should deliver the collected feedback and support the recipient in working through it. Here are several potential options:

- A hired coach, consultant, or HR Business Partner. Using a consultant may help minimize perceived risk to the recipient and allow the recipient to consider the feedback in full.

- Immediate supervisor. This requires that the supervisor review the feedback objectively and tie the survey results directly to tangible steps toward improvement.

- Group sessions. Feedback recipients receive their results and then discuss as a group how to interpret them, what to take away, and how to use them.

Performance evaluation software can also be utilized to help you analyze and administer more effective feedback.

Step 7: Support development

Throughout this article, I've focused on using 360-degree feedback for developmental purposes (rather than performance evaluation).

Therefore, it’s imperative that the recipient co-create and commit to a series of actions supported by the recipient’s team, manager, and organization that aid their professional development.

Here are tips and techniques one can use to support multisource feedback recipients in making progress toward their commitments to growth.

Collaborate on next steps

The deliverer of the feedback ought to work with the recipient on identifying the next steps based on the collected feedback data. This can include:

- Planning for future check-in meetings to discuss progress (with any of the relevant stakeholder groups, as appropriate)

- Identifying sources or opportunities for the recipient to learn more about and practice behaviors and skills they would like to gain or strengthen

- Having the recipient explain specific ways they intend to make the relevant adjustments

- Identifying timelines and milestones to measure progress.

Summarize key takeaways from the feedback session and action items

Have the recipient help you summarize the actions and timelines they have agreed to.

It would be helpful for this information to be made readily available to the recipient and any relevant stakeholders (e.g., their direct supervisor) for whom it makes sense to follow up on these action steps.

Performance management software with this functionality would help secure the survey data while offering easy access.

360-Degree Feedback Best Practices

I’ve covered quite a lot above, so here's a summary of best practices to bear in mind when running your 360-degree feedback process.

- Define clear objectives: Establish clear reasons for using 360-degree feedback. Whether it’s for personal development, team improvement, or performance reviews, clarity will guide both participants and reviewers.

- Select relevant competencies: Choose competencies that align with your organization’s values, culture, and goals. Focus on behaviors that are observable and impactful in the employee’s role.

- Ensure confidentiality: Guarantee anonymity to the reviewers, which encourages honest and constructive feedback. Using an external platform can help preserve confidentiality.

- Train participants: Educate employees on how to give and receive feedback constructively. This will minimize misinterpretations and promote a supportive environment.

- Encourage balanced feedback: Instruct reviewers to provide balanced feedback that includes strengths, areas for improvement, and specific examples.

- Focus on development over evaluation: Use 360-degree feedback primarily for developmental purposes rather than direct performance evaluation. This approach reduces anxiety and helps employees embrace feedback as an opportunity for growth.

- Provide structured follow-up: Design follow-up sessions to discuss the feedback, set development goals, and create action plans. Support from managers or mentors can be instrumental in achieving these goals.

360-Degree Feedback FAQs

What is an example of 360-degree feedback?

A common example is a manager receiving feedback from their direct reports, peers, and supervisor on their communication, leadership, and teamwork.

For instance, peers might highlight collaboration strengths, while direct reports could suggest clearer goal-setting. Combining these perspectives helps managers spot consistent patterns and target specific development goals.

How do I implement 360-degree feedback in my organization?

Start by clearly explaining the process to employees and securing leadership buy-in. Choose focused, relevant questions, pick a reliable feedback tool, set clear timelines, and ensure all participants understand the value of honest input.

After collecting feedback, provide practical guidance for employees to interpret results and plan next steps.

What are common challenges with 360-degree feedback and how do I overcome them?

Typical challenges include vague feedback, resistance from employees, and concerns about confidentiality. Address these by training participants on giving constructive input, communicating the purpose clearly, and offering people support to process results and follow up with development actions.

To address confidentiality concerns, explain to everyone involved that individual comments remain private. Reinforce confidentiality at every stage to build trust in the process.

How often should I run 360-degree feedback cycles?

Most organizations run 360-degree feedback once or twice a year. This timing gives employees enough time to act on feedback and show progress without overwhelming them. Choose a frequency that fits your performance review schedule and organizational needs, and always allow enough time for follow-up development.

Can 360-degree feedback be used for promotions or compensation decisions?

It’s possible, but not recommended. Most HR experts advise using 360-degree feedback mainly for development, since feedback can be subjective.